How to Implement Internal Linking for SEO (Step-by-Step)

How much does your company spend acquiring high-quality backlinks? By “acquiring,” I don’t mean buying links, which is a big no-no in Google’s view. I’m talking about the money it costs to develop high-quality content, pitch it to relevant sites, and get backlinks pointing to your pages.

If you do the math, you are likely to spend anywhere from $100 to $1,000+ to get one backlink. The value you get is undoubtedly high, but is it worth it? For the most part, it is, but when it comes to link building, you’re leaving a lot on the table by ignoring your internal links.

Using internal linking in your SEO strategy is low-hanging fruit: it helps you pass more authority to your most important pages and rank them faster without spending a dime.

In this post, you will learn everything you need to know about using internal links for your link-building strategy.


What Are Internal Links?

Internal links are links that direct visitors from one page of your website to a different page within the same site. For example, this link to our homepage is an internal link.

Simple, right? 😉

Although they seem to play a small part within an SEO strategy, marketers use internal links to establish a site’s architecture and distribute its authority (or “link equity” or “link value” — more on this later). From this architecture, internal links affect SEO by allowing both human visitors and search engine bots (inhuman visitors, perhaps? 🤖 ) to find information fast and efficiently.

The look of an internal link will depend on a website’s design, which is defined by its CSS. Most often, they look like a regular piece of text that is underlined and a different color, like you can see in this case.

On the other hand, the anatomy of an internal link looks like this:

<a href="https://www.domain.com">Anchor Text</a>

The part that’s inside the href attribute is the destination of your internal link, while the text between the opening and closing “a” tags is the text that your users (both human and robotic) will see. Marketers call this the “anchor text.”

Google uses anchor text to make sense of a page’s content when it crawls the page. It gathers all the anchor texts a page receives from external and internal sources and stores them in its index. Although anchor text is only one of the 200-plus factors Google uses to rank pages, it’s an essential one you must consider when defining your internal linking SEO strategy.
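To make the anatomy concrete, here is a minimal Python sketch that reads a page’s HTML and pulls out each internal link with its anchor text. It only uses the standard library; the class name, the function name, and the sample URLs are made up for illustration, and a real crawler would need more care (for example, with subdomains and malformed markup).

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collect (absolute_url, anchor_text) pairs from the <a> tags of one page."""

    def __init__(self, page_url):
        super().__init__()
        self.page_url = page_url
        self.links = []      # finished (url, anchor text) pairs
        self._href = None    # href of the <a> tag we are currently inside
        self._text = []      # text fragments seen inside that tag

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                # Resolve relative links against the page they appear on.
                self._href = urljoin(self.page_url, href)
                self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def internal_links(page_url, html):
    """Return only the links whose host matches the host of page_url."""
    parser = LinkExtractor(page_url)
    parser.feed(html)
    site = urlparse(page_url).netloc
    return [(url, text) for url, text in parser.links if urlparse(url).netloc == site]


# Hypothetical page fragment with one internal and one external link.
sample_html = (
    '<p>Read our <a href="/blog/link-building">link building guide</a> '
    'or visit <a href="https://www.example.org/">another site</a>.</p>'
)
found = internal_links("https://www.domain.com/blog/", sample_html)
print(found)
```

The external link is filtered out because its host differs from the page’s host, which is the same test that separates an internal link from a backlink.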

Related Content:

* The Marketer’s Guide to Link Building

* How – and Why – to Build a Backlink Portfolio

* 10 Most Important Google Ranking Factors (& How to Optimize for Them!)

What Is Internal Link Building?

Internal link-building is the strategic approach to using internal linking for your SEO goals.

This definition alone doesn’t explain much about why it matters, because to understand its importance, we need to take a quick detour on how Google analyzes what pages to rank for any given user search query, a.k.a. “keywords.”

As we said, Google has over 200 ranking factors. One of them is PageRank (named after Google co-founder Larry Page), a score based on the number and quality of links pointing to a page from all its sources (internal and external links).

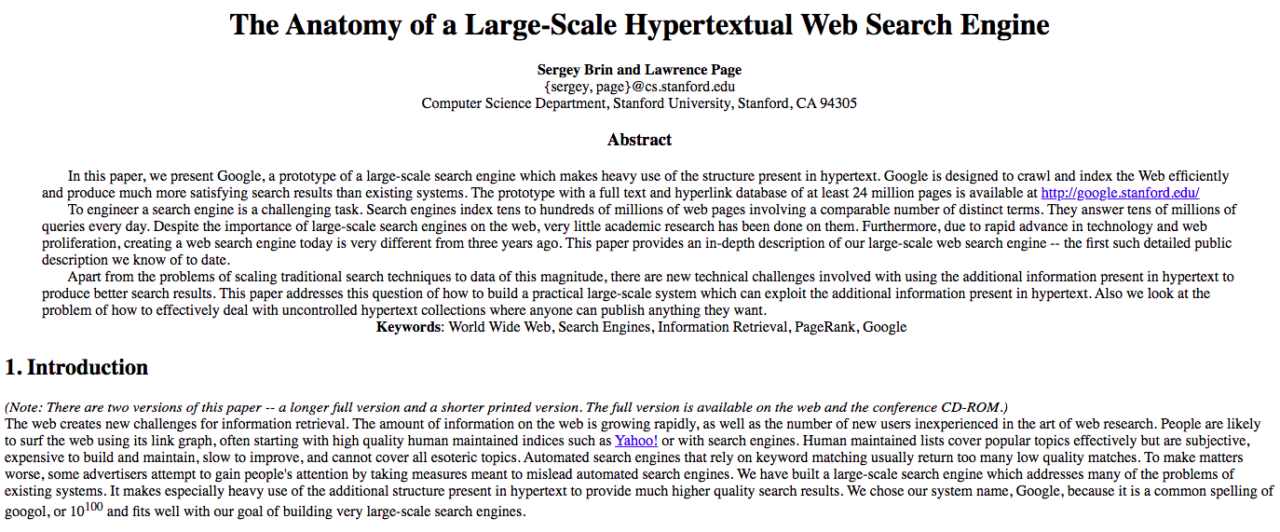

PageRank was one of the core tenets of Larry Page and Sergey Brin’s original Ph.D. paper, which they eventually used to start Google and differentiate it from all its early competitors (none of which you probably remember):

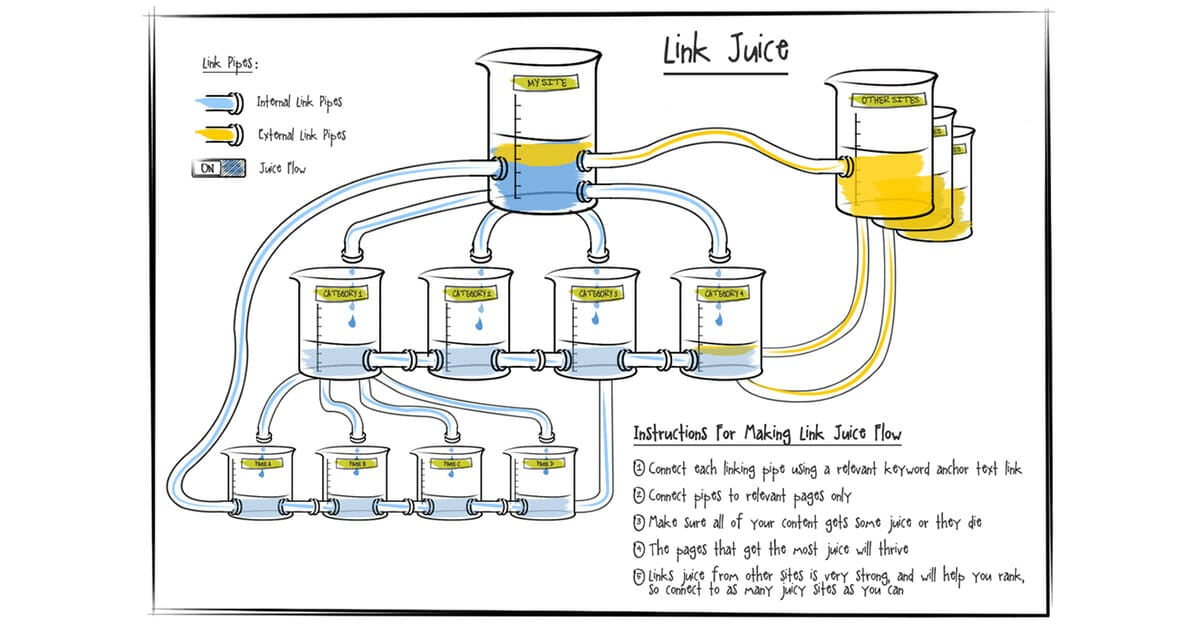

All the links a page receives define its “profile.” From it, Google draws conclusions about its relevance and importance when ranking it for a search query. Whenever a page links to another one (internal or external links), the link passes what SEO managers call “link juice” or “link equity.”

The more links a page receives, and the higher the relevance of the links that point to it, the more link juice it receives and thus the higher its PageRank (or “PR”) becomes.

And the higher a page’s PR, the more likely it will rank for any given keyword.

Link juice is limited. How limited it is, and how much a page passes when it links to another one, is a matter of heated discussion within the SEO community. However, this explains why SEO marketers are always looking to build backlinks from high-authority (high-PR) sites and pages.

Still, looking at backlinks alone as a source of link juice is naïve.

You can also take your site’s PR and distribute it to boost your internal pages’ authority. One way to do this is by taking your highest-PR pages and using them to link to your lowest-PR pages, as long as the latter have value to your business. For example, if a feature page on your SaaS site has low PR, you want to get links to it; the same can’t be said for your privacy policy page.
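To see the idea in numbers, here is a minimal Python sketch of the simplified PageRank formula from the original paper, run on a made-up five-page site. Treat it as an illustration only: Google’s current ranking systems are not public, and the page paths, the 0.85 damping value, and the iteration count are assumptions chosen for the example.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank over a dict of {page: [pages it links to]}.

    Every link target must also appear as a key in `links`.
    """
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, targets in links.items():
            if targets:
                # A page splits the score it passes on evenly across its links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its score over all pages.
                for other in pages:
                    new_rank[other] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical site: "/features" links out but receives no internal links.
site = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/", "/blog/post"],
    "/blog/post": ["/"],
    "/pricing": ["/"],
    "/features": ["/"],
}
before = pagerank(site)

# Add one link from the home page to the neglected feature page.
site_with_link = dict(site)
site_with_link["/"] = site["/"] + ["/features"]
after = pagerank(site_with_link)

for page in ("/features", "/pricing"):
    print(page, round(before[page], 3), "->", round(after[page], 3))
```

In this toy graph the feature page’s score rises once the home page links to it, while the other pages linked from the home page each receive a smaller share. That trade-off is what “link juice is limited” means in practice.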

At the end of the day, you need to remember two crucial points for the right internal linking strategy:

- Every page on your site should link to, and receive a link from, at least one other page.

- Prioritize linking to any page that makes you money, such as a feature page or a product page.


3 Types of Internal Links to Use in Your SEO Strategy

Before we get into the nitty-gritty of an internal link-building strategy, we need to quickly explain the three types of internal links that exist, which will help you plan your link-building tactics better.

1) Contextual Links

Contextual links are the links you add within the body of your content. They add depth to the content FROM which you link, and they give meaning to the page you link TO.

They are mostly used to link to your content (e.g., blog posts, videos) and, occasionally, to feature or product pages. If used properly, they can get not only link juice to the linked page but traffic as well.

2) Navigational Links

Navigational links are situated in a navigation bar, whether that’s the menu above the fold, in the sidebar, in the breadcrumbs or in the footer.

They usually link to a site’s core pages, such as the feature or product pages, the category pages, and the company information pages.

Breadcrumbs tell the visitor where they are on a site by showing the link hierarchy and allowing them to click back to any of the pages that show up in the trail.

Sidebars are positioned to the left or right of any given page and work like navigation menus.

Footers are like navigation menus that show up at the bottom of a page (hence the name), and often contain more links to a site’s main pages as well as popular resources.

In short, navigation links give shape to a website’s structure, boost the user experience and serve the search engine bots.

3) Media

Media links are embedded within an image, graphic, animation or video, and work when someone clicks on the media asset.

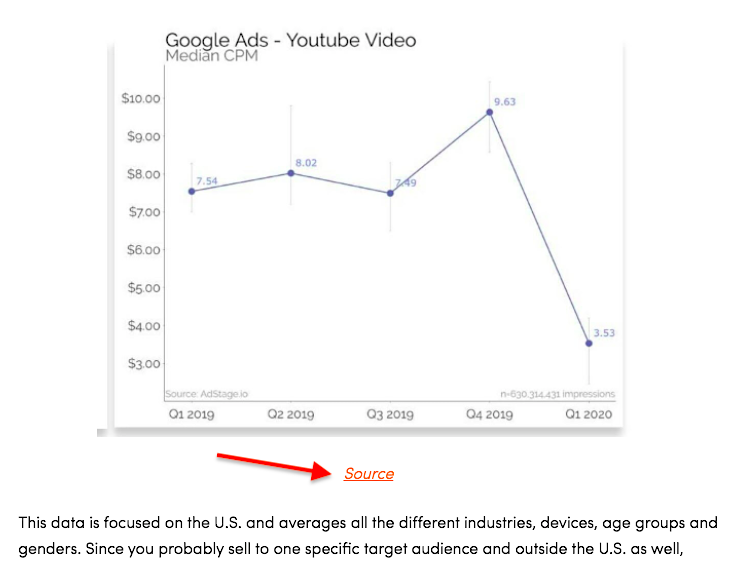

Alternatively, the link could sit below the media as its source credit, which you should include to avoid copyright infringement issues.

Media links are like contextual links, except that you link to a page with a media asset instead of text. Although they don’t have anchor text like other internal links, images carry alternative text (the “alt” attribute, often called the “alt tag”) that tells search engines what the image shows and plays a role similar to anchor text when the image is a link.
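If you want to check your own media links, a small script can list every image that sits inside a link and flag the ones with no alt text. The following Python sketch uses only the standard library; the class name and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class MediaLinkAudit(HTMLParser):
    """List images that sit inside a link, together with their alt text."""

    def __init__(self):
        super().__init__()
        self._href = None       # href of the enclosing <a>, if any
        self.media_links = []   # (href, image src, alt text) triples

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "a":
            self._href = attrs.get("href")
        elif tag == "img" and self._href:
            # A missing or empty alt attribute is stored as an empty string.
            self.media_links.append(
                (self._href, attrs.get("src"), attrs.get("alt") or "")
            )

    def handle_endtag(self, tag):
        if tag == "a":
            self._href = None


# Hypothetical markup: the second image link has no alt text.
sample_html = (
    '<a href="/features"><img src="/img/dashboard.png" '
    'alt="Analytics dashboard feature"></a>'
    '<a href="/pricing"><img src="/img/plans.png"></a>'
)
audit = MediaLinkAudit()
audit.feed(sample_html)
missing_alt = [href for href, src, alt in audit.media_links if not alt]
print(missing_alt)
```

Any link that shows up in the missing list gives search engines no text describing its destination, so those are the first media links to fix.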

Learn More: Overlooked SEO: Optimizing Images and Video for Search

How to Create an Internal Link-Building Strategy

Now that we’ve learned what internal links are and the three types of internal links, it’s time to go through how to create an internal link-building strategy.

Step #1: Audit Your Site

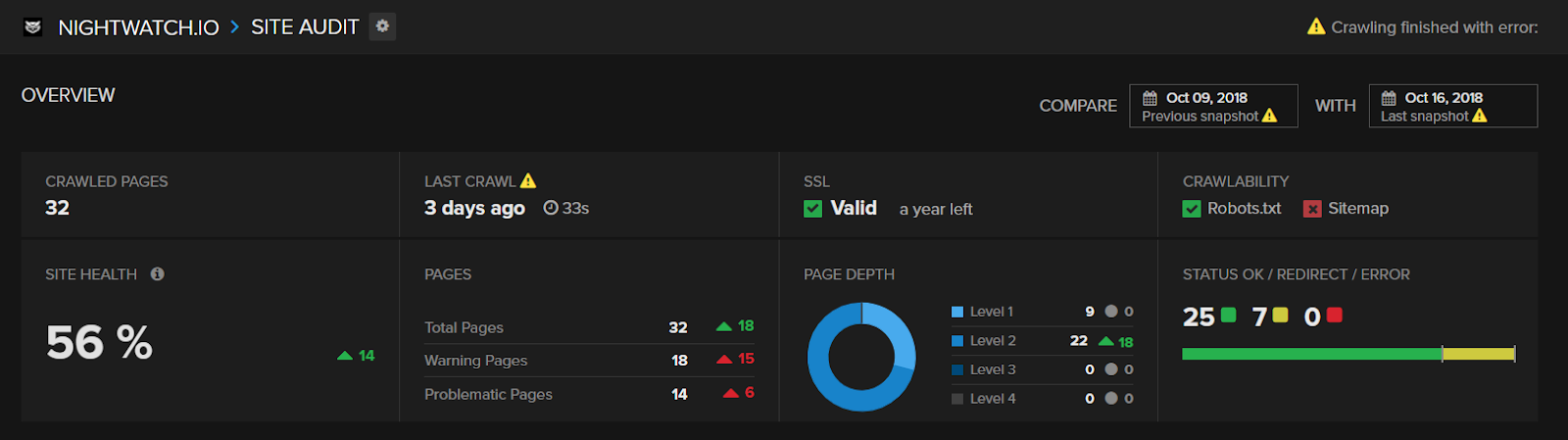

To start, audit your site to uncover how your pages are interconnected (in other words, what your website structure is).

For your site audit, you’ll need the following information, which you will use in the next steps:

- Average click depth: The number of clicks it takes to reach a page starting from the home page.

- Number of inbound links each page receives: These are the backlinks from other sites. They can help you see which pages should be the ones linking out, and which ones should be receiving more links.

- Number of internal links each page receives: Same as above, but for links from your own pages.

- Page authority: Each SEO tool has its own measurement of page authority (URL Rating, Page Authority, etc.), as well as the PageRank mentioned before. No metric is perfect, so take the metric your tool uses as a benchmark.

- Page status: Check if your pages are live (2xx), redirected (3xx), or broken (4xx).


Some of the most popular SEO tools you can use in this step include:

- Screaming Frog

- Ahrefs

- Nightwatch (seen in the image above)

- Semrush

- DeepCrawl

Whichever tool you choose, run your site audit, export the data, clean it to keep the attributes mentioned above, and organize your pages by click depth. This lets you start from your home page, which is usually the most authoritative page on any site, and work your way out to the most remote and least authoritative pages.
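If your tool exports a list of internal links but not click depth, you can compute it yourself. Here is a minimal Python sketch that runs a breadth-first search from the home page and counts internal links per page. The link map is a made-up example; in practice you would build it from your audit export.

```python
from collections import Counter, deque


def click_depths(links, home="/"):
    """Breadth-first search from the home page; depth = minimum clicks needed."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def internal_inlink_counts(links):
    """Count how many distinct pages link to each page (self-links ignored)."""
    counts = Counter()
    for page, targets in links.items():
        for target in set(targets):
            if target != page:
                counts[target] += 1
    return counts


# Hypothetical link map: {page: [pages it links to]}.
site_links = {
    "/": ["/features", "/blog"],
    "/features": ["/pricing"],
    "/blog": ["/blog/post-a", "/features"],
    "/blog/post-a": ["/features"],
    "/pricing": [],
    "/old-landing-page": ["/"],
}
depths = click_depths(site_links)
inlinks = internal_inlink_counts(site_links)

# Pages ordered from the home page outward, as described above.
by_depth = sorted(depths, key=lambda page: (depths[page], page))
# Pages the search never reached cannot be found by clicking from the home page.
unreachable = sorted(set(site_links) - set(depths))
print(by_depth)
print(unreachable)
```

Pages that never appear in the depth map are worth a second look, since neither visitors nor crawlers can reach them by following links from the home page.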

Dive Deeper:

* How to Perform an SEO Audit for Your Website

* How to Improve Your Website Structure to Boost Your Profits

* How (and Why) to Create an Effective Content Structure for Better Ranking

Step #2: Find Link-Building Opportunities

With your site audit done, you can start digging in the data to find any of the following internal linking opportunities:

- Broken links: Pages can break easily without your awareness. This is especially true for sites with thousands of pages and with dynamic URLs. Find any page that returns a 4xx HTTP status code and mark it for fixing (remove the links that point to it, bring the URL back to life, or redirect it).

- Old/inactive pages: You may want to delete pages that are irrelevant or not used anymore. Remember, you want to get as much as possible out of your link equity, so any page that’s not being used should go.

- Redirected pages: If internal links point to a page that now redirects (3xx), update those links so they point directly to the final page you want to rank.

- High/low-authority pages: You want to funnel your link equity from your high-authority pages to your low-authority ones (as long as it makes business sense).

- Low-value pages: Pages that have no value to your business (no traffic, no leads, no sales) can be deindexed from Google to maximize your link value.

- Orphan pages: Make sure all your pages receive internal links or else they will be “orphaned.” Even if those pages have no business value, you should still link to them. If you want to deindex them, you can do so with a “noindex” tag.

You should be able to find each of these link-building opportunities from your audit. If you can’t figure out whether pages are redirected or they’re orphan pages, you may have to re-do your audit, although if the issues only happen for a few URLs, you can check them manually.

Define all the URLs you need to work on so you, or someone from your team, can work on them in the next step.
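Once your audit is exported, a short script can build that worklist for you. The Python sketch below sorts pages into broken, redirected, and orphaned, then lists the internal links that should be repointed past a redirect. The field names (url, status, internal_inlinks) and the sample rows are assumptions; rename them to match your tool’s export.

```python
def find_opportunities(pages):
    """Group audit rows (dicts) into lists of URLs that need attention."""
    todo = {"broken": [], "redirected": [], "orphan": []}
    for row in pages:
        status = row["status"]
        if 400 <= status < 500:
            todo["broken"].append(row["url"])
        elif 300 <= status < 400:
            todo["redirected"].append(row["url"])
        elif row["internal_inlinks"] == 0 and row["url"] != "/":
            todo["orphan"].append(row["url"])
    return todo


def links_to_update(internal_links, redirects):
    """Return (source, old_target, final_target) for links that hit a redirect."""
    updates = []
    for source, target in internal_links:
        final = target
        seen = set()
        # Follow redirect chains to the end, stopping if they loop.
        while final in redirects and final not in seen:
            seen.add(final)
            final = redirects[final]
        if final != target:
            updates.append((source, target, final))
    return updates


# Hypothetical audit rows and redirect map.
audit_rows = [
    {"url": "/", "status": 200, "internal_inlinks": 12},
    {"url": "/features", "status": 200, "internal_inlinks": 4},
    {"url": "/old-pricing", "status": 301, "internal_inlinks": 2},
    {"url": "/blog/deleted-post", "status": 404, "internal_inlinks": 1},
    {"url": "/case-study", "status": 200, "internal_inlinks": 0},
]
redirects = {"/old-pricing": "/plans", "/plans": "/pricing"}
links = [("/blog", "/old-pricing"), ("/blog", "/features")]

todo = find_opportunities(audit_rows)
fixes = links_to_update(links, redirects)
print(todo)
print(fixes)
```

The high/low-authority and low-value checks are left out on purpose, because they depend on business judgment rather than on a status code.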


Step #3: Add the Internal Links

Adding the internal links is as simple as taking one of the URLs you defined in the previous step and inserting it using your CMS (if you somehow don’t use one, you can always use the HTML tags shown earlier).

Creating an internal link isn’t the real issue here. There are three key issues we must cover to solidify your internal linking strategy.

Key Issue #1: The first one is about the number of internal links to create. As a principle, every indexable page should have at least one internal link. But when it comes to ranking pages that your business depends on, you want to make the most out of your link equity.

To solve this issue, you need to take into consideration your:

- Keyword’s competitiveness

- Domain authority

- Page authority

- Link profile

The higher the competitiveness for each keyword you want a page to rank for, the more internal links you need to create. However, this ignores your link value which, as we mentioned, should flow from the most authoritative pages to the ones you want to rank for.

Key Issue #2: This leads us to the second point, which focuses on the pages on which you will create the internal links. Since your link equity is limited, you need to funnel it strategically. Quality beats quantity: a few internal links from your most powerful pages will be better than creating hundreds of links from weak ones.

One useful approach is to create a few internal links from authoritative pages to your target pages and mix them with links from other pages regardless of their value. You can also add internal links whenever they’re needed to provide a positive user experience.
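One way to shortlist source pages is to look for your strongest pages that already mention the target keyword but don’t link to the target yet. Here is a minimal Python sketch of that filter. The field names, authority scores, and sample pages are hypothetical, and the plain substring match is a deliberately simple stand-in for real keyword matching.

```python
def suggest_sources(pages, target_url, keyword, limit=3):
    """Highest-authority pages that mention the keyword but don't link to the target."""
    keyword = keyword.lower()
    candidates = [
        page for page in pages
        if page["url"] != target_url
        and keyword in page["text"].lower()
        and target_url not in page["links"]
    ]
    candidates.sort(key=lambda page: page["authority"], reverse=True)
    return [page["url"] for page in candidates[:limit]]


# Hypothetical pages with an authority score taken from your SEO tool.
pages = [
    {"url": "/blog/seo-basics", "authority": 45,
     "text": "Internal linking is a core SEO skill.", "links": ["/"]},
    {"url": "/blog/site-structure", "authority": 60,
     "text": "Good internal linking shapes your structure.", "links": ["/features"]},
    {"url": "/blog/backlinks", "authority": 70,
     "text": "How to earn backlinks.", "links": []},
    {"url": "/blog/audit", "authority": 80,
     "text": "Audit your internal linking first.", "links": ["/guide/internal-linking"]},
]
sources = suggest_sources(pages, "/guide/internal-linking", "internal linking")
print(sources)
```

Pages that already link to the target or never mention the keyword are skipped, so the result is a short list of places where a new link would read naturally.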

Key Issue #3: Finally, you need to use an anchor text that is relevant to the keyword you want each page to rank for. The industry standard says that you should use the keyword as the anchor text. Whether you should use the exact match, phrase match, or broad match is a lengthy discussion we can’t cover now, but for the most part, you should err on the side of caution.

Using too many exact-match anchor texts, from either internal or external links, is a warning sign for Google. After all, there’s little chance that people will magically link to a page always using the same phrase (this doesn’t apply to brand or product names).

Remember this rule: make your anchor text look natural. Sure, add your keyword, but do it in a way that doesn’t look like you are trying to trick Google.
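To check how natural your anchors look, you can measure how the anchor texts pointing to one page split between exact-match, partial-match, and everything else. The Python sketch below is one simple way to do it. The sample anchors are invented, and there is no published threshold for what counts as “too many,” so read the shares as a signal rather than a rule.

```python
from collections import Counter


def anchor_profile(anchors, keyword):
    """Share of exact-match, partial-match and other anchors pointing at one page."""
    keyword = keyword.lower().strip()
    counts = Counter()
    for text in anchors:
        text = text.lower().strip()
        if text == keyword:
            counts["exact"] += 1
        elif keyword in text:
            counts["partial"] += 1
        else:
            counts["other"] += 1
    total = sum(counts.values()) or 1  # avoid dividing by zero on an empty list
    return {kind: counts[kind] / total for kind in ("exact", "partial", "other")}


# Hypothetical anchors collected for a single target page.
anchors = [
    "internal linking",
    "internal linking",
    "our guide to internal linking",
    "read more",
    "Internal Linking",
]
profile = anchor_profile(anchors, "internal linking")
print(profile)
```

If the exact-match share dominates, vary the wording of a few links until the mix looks like something people would write on their own.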

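One final note before we move on: Some SEOs try to conserve link value by adding the “nofollow” value to the rel attribute of links that point to low-priority pages. The idea is that telling Googlebot not to follow a certain link removes it from the page’s link equity calculation, leaving more for the remaining links. Keep in mind that nofollow is set on individual links, not on pages, and that Google treats it as a hint and has said that nofollowed links do not pass their share on to the other links on the page, so use this tactic sparingly.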

Step #4: Scale Your Internal Link Building

A one-off website audit and internal link-building optimization project is great. You can also manually add links to each new piece of content from existing pages that mention its keyword or topic. However, neither approach is efficient in the long term.

Scale your internal linking efforts by making it so that every time you create new content, you create internal links to the pages you want to rank for. Five ways you can do so include:

- Breadcrumbs: As we explained, breadcrumbs create internal links to all the preceding pages from whichever page the visitor is on.

- Sitemaps: This file provides a list of all the pages and media assets your site has. It helps with crawling and indexing.

- Recommended Reading/Most Popular Posts: Besides linking to relevant and popular content pieces, you can extend the time on site and drive more traffic to the recommended pieces.

- Upselling and cross-selling: This works like the previous tip but for e-commerce sites. You can also generate more sales and increase your average order value.

- Category/Tags Pages: These pages will boost your usability and internal links automatically based on your page’s category and tags.
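Most CMSs and themes handle these for you, but it helps to see how little logic is involved. As an example, here is a minimal Python sketch that builds a breadcrumb trail from a page’s URL path, so every new page automatically links to the levels above it. The function names and the sample URL are hypothetical, and it assumes your URL structure mirrors your site hierarchy.

```python
from html import escape
from urllib.parse import urlparse


def breadcrumb_links(url, home_label="Home"):
    """Build (label, path) pairs for every level above and including the page."""
    path = urlparse(url).path.strip("/")
    crumbs = [(home_label, "/")]
    current = ""
    for segment in filter(None, path.split("/")):
        current += "/" + segment
        # Turn a slug such as "internal-linking" into a readable label.
        crumbs.append((segment.replace("-", " ").title(), current))
    return crumbs


def render_breadcrumbs(crumbs):
    """Render the trail as HTML; the last crumb is the current page, so it stays plain text."""
    parts = [
        f'<a href="{escape(path)}">{escape(label)}</a>'
        for label, path in crumbs[:-1]
    ]
    parts.append(escape(crumbs[-1][0]))
    return " &gt; ".join(parts)


crumbs = breadcrumb_links("https://www.domain.com/blog/seo/internal-linking")
trail = render_breadcrumbs(crumbs)
print(trail)
```

Every page rendered this way gains an internal link to each of its parent pages without anyone having to add one by hand.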

Related Reading:

* 5 Ways to Improve Your SEO without Building Links

* The Ultimate Guide to Link Building with Content for SEO

Conclusion

By now, you know that using a robust internal linking structure is a simple and easy way to give your SEO strategy an extra kick. Now that you understand how it works and how to implement it, you can get started today!

- Start with the audit, defining the link opportunities as you go through.

- Create the links, taking into consideration the link equity and the anchor text.

- Finally, scale your efforts using breadcrumbs, recommended pages, and sitemaps, among others.

Before you know it, your site will see a nice increase in organic traffic without having created any backlinks. Who said link building was hard? 🤷🏽

Hopefully you learned how to build internal links for SEO! But if you just want an expert SEO agency to do it for you, click here.

Frequently Asked Questions (FAQs)

How do I create an internal link?

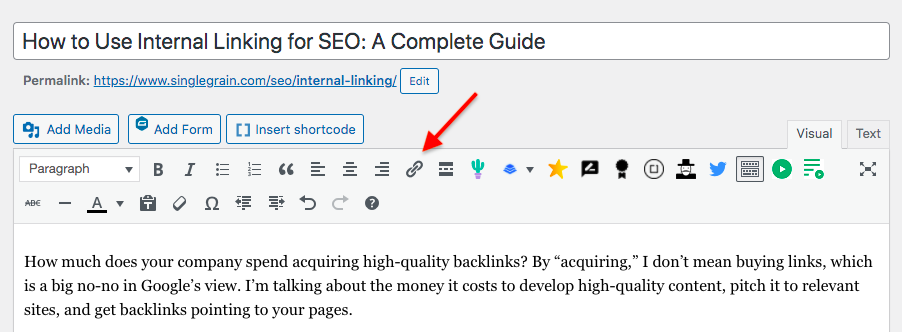

Every CMS, such as WordPress, has a link button on the menu that you can click after you have highlighted the anchor text you want to link from:

You can also highlight your anchor text and then hit Command + K on your Mac’s keyboard or Ctrl+K on your PC’s keyboard and then paste in the link when the link box pops up.

If you want to take the manual route, you need to add the anchor tag like so:

<a href="https://www.domain.com">Anchor Text</a>

Add your destination URL within the href quotes and your anchor text between the anchor tags.

What are the 3 types of internal links?

- Contextual: This is when you link to a second page within the first page’s content, such as a blog post.

- Navigational: This includes any link in your menu bar, sidebar or footer.

- Media: This is any link embedded in an image, graphic or video.

How many internal links per page is recommended?

There are no hard rules. The more a page links out to other ones, whether internally or externally, the more it will dilute its link value. The same applies to your user experience: too many links will confuse the visitor.

Still, it will depend on the page and website — linking to 200 URLs from the home page is not the same as from a 500-word blog post. If a page looks too crowded with links, then that’s too many.