AI SEO Metrics for Generative Search Success in 2025

AI SEO metrics are the new scorecard for visibility in generative search, where answer engines summarize, cite, and direct attention before a single click. If you’re still judging success by blue-link rankings and raw organic sessions alone, you’re missing the invisible influence of AI overviews, knowledge panels, and zero-click answers that shape demand upstream.

This guide maps the measurement shift step by step: the specific visibility and authority signals that matter, how to instrument them, how to benchmark them, and how to turn them into narratives your leadership team will trust. You’ll get a practical framework, a stack blueprint, an executive-ready dashboard plan, and a 90-day action playbook—so your team can quantify real impact in the generative AI era.

Why your legacy SEO dashboard breaks in generative SERPs

Generative search blends answers, citations, and follow-up prompts at the top of results, compressing classic SERP real estate and shifting the user journey. Visibility often occurs without a visit: users read a synthesized answer, absorb source mentions, and later return via branded search, social, or direct navigation.

That makes traditional web KPIs lagging and incomplete. Rankings fluctuate by answer variant and by which sources an AI overview cites at any given time. Impressions can be meaningful even when no click happens, and authority accrues to entities, not just pages, as LLMs build topic graphs from multiple sources.

In this world, treating “traffic” as the only evidence of success hides upstream wins, like earning a persistent citation in a high-value AI panel or expanding your presence across intent-refining prompts. Measurement must move from page-level positions to entity-level coverage and from single-click attribution to multi-surface influence.

From clicks to coverage: Visibility without visits

Three realities drive the shift. First, zero-click surfaces now mediate discovery, so coverage and citations matter as much as CTR. Second, SERP volatility spikes when AI panels reshuffle sources, so timing and update cadence become strategic. Third, entity authority determines whether your brand is eligible to be retrieved, summarized, and trusted by answer engines.

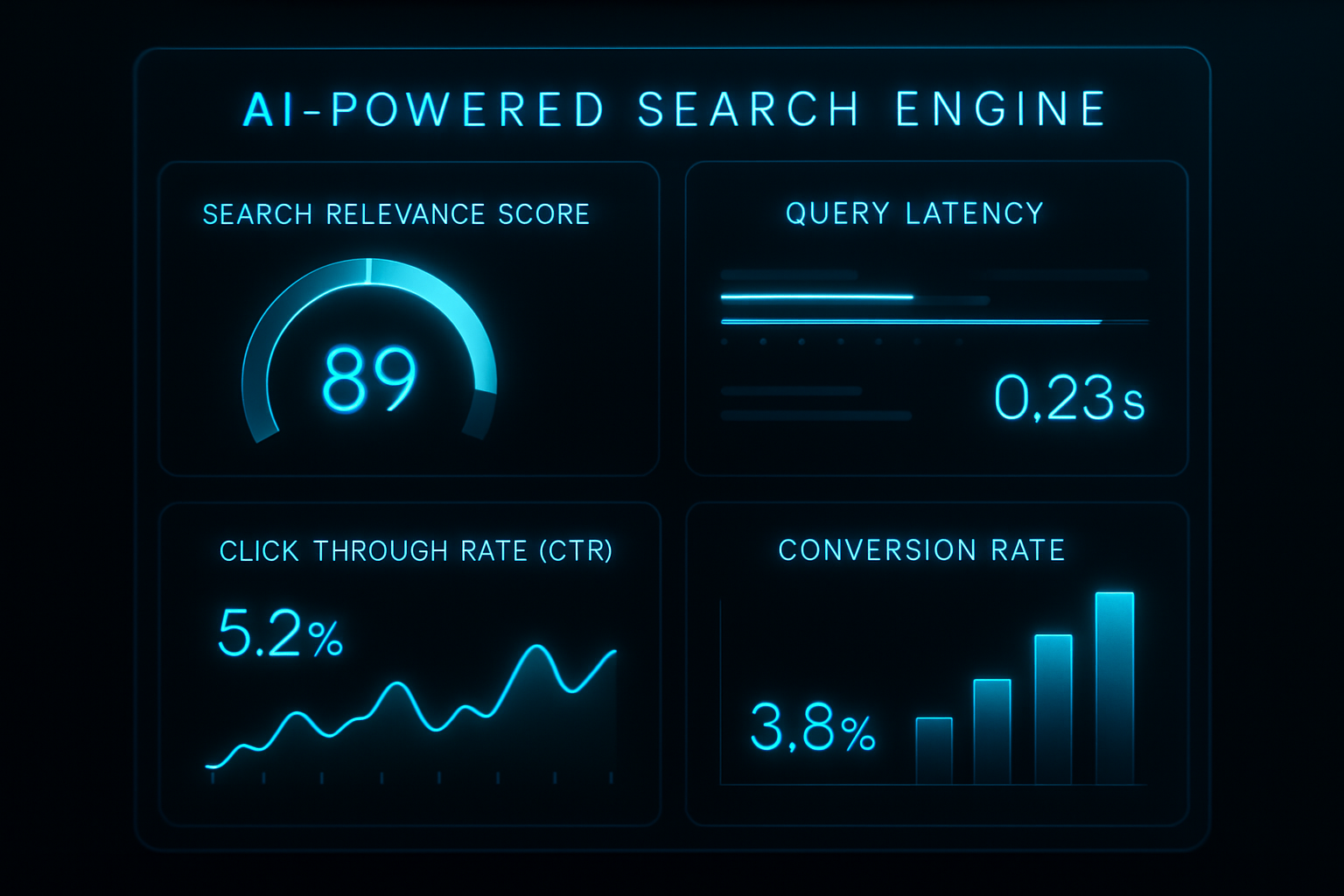

The new scorecard: AI SEO metrics that matter

To measure what actually moves revenue in generative search, build your scorecard around AI SEO metrics that quantify visibility, authority, and demand creation. These KPIs reveal coverage and quality across AI panels, answer engines, and conventional blue-link results without double-counting clicks.

Use the following metric set as your baseline. Finalize definitions with your analytics owner and lock the taxonomy in your BI layer before rollout.

- AI citation count: How often your pages or brand are cited in AI overviews and answer panels for your target queries. Track by query cluster, and segment by commercial value.

- AI share of voice (SOV): Your percentage of total citations across a defined query set versus competitors. Useful as a proxy for market share on generative surfaces.

- Zero-click surface presence: The number and quality of non-click surfaces you occupy (AI panels, knowledge cards, “From the web” modules). Weight by intent and prominence.

- Generative SERP volatility index: A score indicating how frequently AI overviews and their citations change within your category; higher values mean less stable panels. Use as a trigger for content refreshes.

- Entity authority mentions: References to your organization, products, and people across authoritative sources that LLMs likely ingest (news, high-tier blogs, reputable directories). Combine with schema completeness.

- Topic depth: The breadth of subtopics and supporting queries you cover per intent cluster. Map to a hub-and-spoke model and track gaps over time.

- Chunk retrieval frequency: How often specific content chunks (paragraphs, FAQs) are retrieved when target queries are run against an LLM retrieval pipeline. Measures semantic accessibility, not just page presence.

- Embedding relevance score: Cosine similarity between your content embeddings and target query embeddings. Monitor drift as queries evolve and update content accordingly.

- Traffic quality: Downstream quality markers of AI-referred traffic, including bounce-back to the SERP, engaged time, and conversion depth reached.

- Brand demand: Movement in brand search queries and direct visits that can be correlated to increased AI surface coverage, even when clicks do not rise.

- Time-to-insight: Days from SERP change to action. Shorter windows mean faster adaptation to AI panel reshuffles and competitive moves.
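
As a rough illustration of the first two metrics in the list, the sketch below computes AI citation count and AI SOV from a set of observed citations. The `observations` data, the domain names, and both function names are hypothetical; how you collect the citations (scripts or a third-party monitor) is left open.

```python
from collections import Counter

# Hypothetical observations: (query, cited_domain) pairs collected from
# AI overviews for one query cluster. All values are illustrative.
observations = [
    ("best crm for startups", "example.com"),
    ("best crm for startups", "competitor-a.com"),
    ("crm pricing comparison", "example.com"),
    ("crm pricing comparison", "competitor-b.com"),
    ("crm onboarding checklist", "competitor-a.com"),
]

def citation_counts(obs):
    """Count citations per domain across the query set."""
    return Counter(domain for _, domain in obs)

def share_of_voice(obs, domain):
    """Percentage of all citations in the query set that belong to `domain`."""
    counts = citation_counts(obs)
    total = sum(counts.values())
    return 100.0 * counts[domain] / total if total else 0.0

counts = citation_counts(observations)
sov = share_of_voice(observations, "example.com")
print(counts["example.com"], f"{sov:.1f}%")  # prints: 2 40.0%
```

Segmenting by commercial value would mean running the same calculation per query cluster, or weighting each observation by a value score you define.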

Smart Rent used a combination of search everywhere optimization (SEVO), technical SEO, and content restructuring to gain dominance in AI search. It gained 100% more presence on ChatGPT, Perplexity, and Gemini, and 50% more visibility on AI Overviews.

Bierman Autism reframed their technical SEO around AI search, achieving a 100% uplift in Gemini and a 75% increase in AI Overview capture. This also resulted in a 21% spike in keyword growth.

AI SEO metrics to monitor daily, weekly, and monthly

Adopt a simple cadence so your team acts on signal, not noise. Daily monitoring should focus on generative SERP volatility thresholds and critical citation changes for your top 25 revenue-driving queries.

Weekly reviews should assess AI Citation Count, AI SOV, and newly opened or closed topical gaps. Treat any double-digit SOV swing or loss of citation on high-intent terms as a sprint trigger for content updates.

Monthly reviews should tie AI surfaces to demand: brand query growth, assisted conversions, and funnel velocity for AI-exposed cohorts. Keep executive views high-level and narrative-driven, reserving detailed diagnostics for the operating team.

Build your AI-era measurement stack

The right stack makes AI SEO metrics durable and repeatable. Start by defining authoritative query clusters tied to commercial value, then instrument data capture across both classic and generative surfaces.

Instrumentation, data, and standards

Capture event-level data in GA4 and enrich with server-side logs to identify AI-referred entry points and bounce-back signals. Add scripts or third-party monitors to track AI overview presence, cited sources, and panel reshuffles across your query clusters.
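
One way to identify AI-referred entry points from server-side logs is to classify the referrer hostname. The sketch below assumes a hand-maintained hostname map and log rows already parsed into `(referrer, landing_path)` pairs; the map contents and function names are illustrative, and you should verify them against the referrers that actually appear in your logs. It will likely not separate clicks from AI overviews inside a search results page, since those tend to arrive with an ordinary search-engine referrer.

```python
from urllib.parse import urlparse

# Assumed mapping of referrer hostnames to AI surfaces; verify against
# the referrers that actually appear in your own logs.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}

def classify_referrer(referrer_url):
    """Return the AI surface name for a referrer URL, or None if unknown."""
    host = (urlparse(referrer_url).hostname or "").lower()
    return AI_REFERRER_HOSTS.get(host)

def ai_entry_points(log_rows):
    """Group landing paths by AI surface from (referrer, landing_path) rows."""
    entries = {}
    for referrer, path in log_rows:
        surface = classify_referrer(referrer)
        if surface:
            entries.setdefault(surface, []).append(path)
    return entries

# Hypothetical parsed log rows.
rows = [
    ("https://chatgpt.com/", "/pricing"),
    ("https://www.perplexity.ai/search?q=crm", "/blog/crm-guide"),
    ("https://www.google.com/", "/pricing"),
    ("", "/"),
]
entry_points = ai_entry_points(rows)
```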

In your content pipeline, standardize schema (Organization, Product, FAQPage, HowTo, and Person for authors) and maintain consistent entity references. Use chunking and embeddings so key passages are retrievable and semantically aligned with intent variants.
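
To make schema standardization measurable, you could generate JSON-LD from one source of entity data and score each block against an internal checklist. The sketch below is a minimal example: the organization details are placeholders, and `REQUIRED_FIELDS` is an assumed house standard, not a schema.org requirement.

```python
import json

# Hypothetical organization details; replace with your own entity data.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Co",
    "url": "https://www.example.com",
    "logo": "https://www.example.com/logo.png",
    "sameAs": ["https://www.linkedin.com/company/example-co"],
}

# Assumed internal standard: properties every Organization block should carry.
REQUIRED_FIELDS = ["name", "url", "logo", "sameAs", "description"]

def schema_completeness(entity, required=REQUIRED_FIELDS):
    """Fraction of required properties that are present and non-empty."""
    present = [field for field in required if entity.get(field)]
    return len(present) / len(required)

def to_json_ld(entity):
    """Serialize the entity as a JSON-LD script tag for the page head."""
    body = json.dumps(entity, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'

completeness = schema_completeness(organization)  # "description" is missing here
markup = to_json_ld(organization)
```

Generating every page's markup from the same entity record is one way to keep names, URLs, and profile links consistent across the site.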

Operationalizing generative visibility

Visibility in answer engines is a content and structure problem, not a rank problem. Map intent hierarchies, then prioritize page improvements that elevate entities, clarify definitions, and address follow-up prompts explicitly.

When your team is ready to optimize for AI summaries and answer panels, align production with modern practices in generative engine optimization. For a practical blueprint on what to change in content and markup, study how to optimize content for AI search with generative engine SEO.

Tools and automation

Adopt an internal vector index for priority content so your team can score embedding relevance and simulate retrieval. Connect these signals to your BI layer to highlight drift and automatically trigger refreshes.
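
A minimal sketch of the scoring step follows, using cosine similarity between a query vector and content-chunk vectors. The three-dimensional vectors are toy stand-ins for real embeddings, which would come from whichever embedding model you choose; the chunk IDs and function names are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero vector as unrelated
    return dot / (norm_a * norm_b)

def rank_chunks(query_vec, chunk_vecs):
    """Return (chunk_id, score) pairs sorted by descending similarity."""
    scored = [(cid, cosine_similarity(query_vec, vec)) for cid, vec in chunk_vecs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

# Toy vectors standing in for real embeddings of two content chunks.
chunks = {
    "faq-pricing": [0.9, 0.1, 0.0],
    "intro": [0.2, 0.8, 0.1],
}
query = [1.0, 0.0, 0.0]
ranking = rank_chunks(query, chunks)
```

Storing the top score per target query over time gives you a simple drift signal: a falling score suggests the query wording or intent has moved away from the content.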

When choosing vendors, align capabilities with your KPI stack: citation tracking, volatility detection, entity mapping, and content-gap scoring. A concise comparison helps; shortlist partners using a 2025 ranking of generative AI SEO services, and pressure-test feature claims against your measurement plan.

Agencies and in-house teams evaluating platforms should balance accuracy, data access, and workflow fit; this guide to evaluate AI SEO tools outlines criteria to separate helpful automation from vanity features.

Tie it together with gap analysis

AI-era visibility is won by finding and filling topic gaps faster than competitors. Platforms that continuously analyze competitor coverage, flag missing subtopics, and draft semantically aligned content shorten the time from insight to publication.
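
At its simplest, gap detection is a set comparison between the subtopics you cover and those competitors cover. The sketch below assumes you already have subtopic lists per intent cluster; the cluster names, subtopics, and function names are made up for illustration.

```python
def topic_gaps(own_coverage, competitor_coverage):
    """Subtopics competitors cover that we do not, keyed by cluster."""
    gaps = {}
    for cluster, competitor_topics in competitor_coverage.items():
        missing = set(competitor_topics) - set(own_coverage.get(cluster, ()))
        if missing:
            gaps[cluster] = sorted(missing)
    return gaps

def coverage_depth(own_coverage, competitor_coverage):
    """Share of all known subtopics in each cluster that we cover."""
    depth = {}
    for cluster, competitor_topics in competitor_coverage.items():
        own = set(own_coverage.get(cluster, ()))
        universe = own | set(competitor_topics)
        depth[cluster] = len(own) / len(universe) if universe else 0.0
    return depth

# Hypothetical subtopic inventories.
own = {"crm pricing": ["plans", "discounts"]}
competitors = {
    "crm pricing": ["plans", "discounts", "migration costs"],
    "crm onboarding": ["checklist"],
}
gaps = topic_gaps(own, competitors)
depth = coverage_depth(own, competitors)
```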

One option that fits this “detect → draft → iterate” loop is Clickflow, where advanced AI analyzes your competition, identifies content gaps, and creates strategically positioned content that’s designed to outperform incumbents across AI panels and classic results.

If you’re formalizing this program across multiple channels and need senior support for stack design, collaborating with an expert SEVO/AEO partner can accelerate results. See how established teams structure metrics and governance in this walkthrough of how AI marketing agencies measure campaign success.

Get a FREE consultation to design an end-to-end measurement architecture, connect AI SEO metrics to revenue, and operationalize faster content iterations with clear executive reporting.

Benchmarking and reporting leaders will believe

Executives want a clean narrative: What changed in the market, how we’re visible in AI surfaces, and how that visibility translates into demand and revenue. Design a dashboard that ladders from category shifts to financial outcomes, with layered detail for analysts.

Executive dashboard architecture

Structure the view top-down. Begin with category-level generative volatility and AI SOV trends. Show your AI Citation Count and Zero-Click Presence on revenue-weighted query clusters. Close with brand demand growth, assisted conversions, and pipeline movements.

Below the executive tiles, expose diagnostics: entity authority lift, coverage depth by topic, and chunk retrieval performance for priority pages. Tag every widget to a specific owner and a clear action path so accountability is obvious.

| Measurement Category | Traditional KPI | AI-Era KPI | Why It Matters Now |
|---|---|---|---|
| Visibility | Average Rank | AI Citation Count, AI SOV | Answer engines gate discovery, and citations drive consideration before clicks. |
| Engagement | CTR, Bounce Rate | Zero-Click Surface Presence, Bounce-back-to-Google | Quality is evidenced by engagement with AI-referred sessions and reduced pogo-sticking. |
| Authority | Domain Authority proxies | Entity Authority Mentions, Schema Completeness | Entities, not pages, determine eligibility for inclusion in AI overviews. |
| Resilience | Position Stability | Generative SERP Volatility Index | High volatility demands agile refreshes timed to panel reshuffles. |
| Relevance | Keyword Coverage | Topical Coverage Depth, Embedding Relevance | Semantic breadth and closeness to intent variants influence retrieval. |
| Revenue | Last-click Conversions | Brand Demand Growth, Assisted Conversion Value | Zero-click impressions influence later demand; attribution must reflect it. |

When reporting to finance, anchor the story in category dynamics and controls you own: volatility conditions, your AI SOV trajectory, and the conversion impact of cohorts that encountered AI panels featuring your brand. For broader operationalization, explore disciplined measurement practices adopted by sophisticated teams in guides on AI-driven campaign performance.

Turning insights into sprints

Build response playbooks linked to thresholds. If AI SOV drops by more than five points on a priority cluster or a top revenue query loses a citation, auto-create a refresh sprint with owners, brief, and due dates.
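
The threshold rule above could be encoded roughly as follows. The five-point threshold comes from the text; the `ClusterSnapshot` structure, its field names, and the sample data are assumptions, and creating the actual sprint ticket is left to whatever project tool you use.

```python
from dataclasses import dataclass, field

SOV_DROP_THRESHOLD = 5.0  # percentage points, per the playbook rule

@dataclass
class ClusterSnapshot:
    cluster: str
    sov_previous: float
    sov_current: float
    # Top revenue queries in this cluster that lost a citation (hypothetical field).
    lost_citations: list = field(default_factory=list)

def refresh_triggers(snapshots, threshold=SOV_DROP_THRESHOLD):
    """Return (cluster, reasons) for clusters that should open a refresh sprint."""
    triggered = []
    for snap in snapshots:
        reasons = []
        drop = snap.sov_previous - snap.sov_current
        if drop > threshold:
            reasons.append(f"SOV down {drop:.1f} points")
        if snap.lost_citations:
            reasons.append("lost citation on: " + ", ".join(snap.lost_citations))
        if reasons:
            triggered.append((snap.cluster, reasons))
    return triggered

# Illustrative weekly snapshots.
snapshots = [
    ClusterSnapshot("pricing", 30.0, 24.0),
    ClusterSnapshot("onboarding", 20.0, 18.0),
    ClusterSnapshot("integrations", 15.0, 15.0, ["crm api integration"]),
]
triggers = refresh_triggers(snapshots)
```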

Conversely, when volatility spikes above your category baseline, push your most eligible pages through a quick semantic and structural update play to defend or expand coverage. Integrate these automations with your content system to compress “insight-to-publish” time.
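
There is no standard formula for a generative volatility index, so the sketch below uses one plausible definition: the mean Jaccard distance between consecutive daily sets of cited sources. The definition, the `tolerance` multiplier, and the sample data are all assumptions to adapt to your own category.

```python
def jaccard_distance(a, b):
    """1 minus the overlap ratio of two sets; 0 = identical, 1 = disjoint."""
    a, b = set(a), set(b)
    union = a | b
    if not union:
        return 0.0
    return 1.0 - len(a & b) / len(union)

def volatility_index(daily_citation_sets):
    """Mean Jaccard distance between consecutive daily snapshots."""
    pairs = list(zip(daily_citation_sets, daily_citation_sets[1:]))
    if not pairs:
        return 0.0
    return sum(jaccard_distance(prev, curr) for prev, curr in pairs) / len(pairs)

def is_spike(current, baseline, tolerance=1.5):
    """True when current volatility exceeds the baseline by the given multiple."""
    return current > baseline * tolerance

# Hypothetical cited-source sets for one query over four days.
snapshots = [
    {"a.com", "b.com", "c.com"},
    {"a.com", "b.com", "c.com"},
    {"a.com", "b.com", "d.com"},
    {"e.com", "f.com", "g.com"},
]
index = volatility_index(snapshots)  # distances 0.0, 0.5, 1.0 -> mean 0.5
```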

A 90-day action plan to operationalize AI SEO metrics

Put the framework to work with a concise rollout plan. Assign cross-functional owners early and create a single BI view everyone trusts.

Phase 1 (Days 1–30): Lay foundations

- Define revenue-weighted query clusters and map target AI panels for each.

- Implement monitoring for AI overview presence, cited sources, and generative volatility across clusters.

- Standardize schema on priority pages and align entity references across your site and profiles.

- Stand up baseline dashboards for AI Citation Count, AI SOV, Zero-Click Presence, and brand demand.

Phase 2 (Days 31–60): Ship quick wins

- Run two “gap sprints” to fill missing subtopics in top clusters; measure lift in AI SOV and citations.

- Refresh 10–15 high-impact pages to improve embedding relevance, tighten chunk structure, and ensure FAQ coverage.

- Introduce volatility-triggered refresh policies and document thresholds visible to stakeholders.

- Tie AI-exposed cohorts to funnel metrics and annotate changes in your BI tool.

Phase 3 (Days 61–90): Scale and automate

- Operationalize a weekly gap-detection → content brief → publish cycle with clear SLAs.

- Embed AI SEO metrics into marketing QBRs and connect them to pipeline targets.

- Automate alerts for lost citations, SOV swings, and volatility peaks with preassigned owners.

- Expand programmatic coverage for long-tail supporting queries where generative panels open new surface area.

If your team wants to compress Phase 1–2 timelines, using an AI platform that continuously scans competitors, highlights missing angles, and drafts content can shorten cycle time. A pragmatic option is Clickflow, which aligns gap analysis and creation so your AI-era coverage improves faster without adding headcount.

As you scale production, connect automation with your content ops. For templates, governance, and role definitions that reduce friction from insight to publish, this guide to end-to-end automation in SEO outlines practices that keep throughput high without sacrificing quality.

Turn AI SEO metrics into revenue results

Generative search rewards brands that measure what users actually see, cite, and remember. When you elevate AI SEO metrics—citations, AI SOV, volatility, entity authority, and demand lift—your team stops arguing about clicks and starts influencing the narrative that drives revenue.

If you need a partner to integrate this KPI stack with your analytics, connect dashboards to revenue, and build content that earns reliable AI coverage, get a FREE consultation. We’ll help you align strategy, tools, and execution so your investment in AI visibility produces measurable growth—quarter after quarter.

Frequently Asked Questions

How do I set realistic targets for AI-era SEO when there’s no historical baseline?

Start with a pilot query cluster and calculate targets from your current share versus the top three competitors. Use a rolling median of 4–8 weeks for baseline stability, then ratchet targets quarterly as you expand coverage.
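
A small sketch of the baseline and target calculation is shown below. The rolling median follows the answer above; the target rule, which closes a fixed fraction of the gap to the average of the top three competitors, is an assumption, as are the sample numbers.

```python
from statistics import median

def rolling_median(values, window):
    """Median of each trailing window; series shorter than `window` yield []."""
    return [
        median(values[i - window + 1 : i + 1])
        for i in range(window - 1, len(values))
    ]

def quarterly_target(current_baseline, top_competitor_sov, gap_fraction=0.25):
    """Assumed rule: close `gap_fraction` of the gap to the competitor average."""
    benchmark = sum(top_competitor_sov) / len(top_competitor_sov)
    gap = max(benchmark - current_baseline, 0.0)
    return current_baseline + gap_fraction * gap

# Hypothetical weekly AI SOV readings; week 4 is an outlier the median absorbs.
weekly_sov = [18.0, 22.0, 19.0, 35.0, 20.0, 21.0]
baseline = rolling_median(weekly_sov, window=4)
target = quarterly_target(baseline[-1], [40.0, 30.0, 26.0])
```

A median is used rather than a mean so that a single volatile week does not distort the starting point.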

What’s the best way to attribute revenue to AI-driven visibility without double-counting?

Log exposures to AI surfaces as their own touchpoint and apply multi-touch modeling that weights view-through interactions separately from clicks. Tag cohorts at first exposure and compare funnel metrics to matched holdouts to validate lift.

Which governance and compliance practices should we follow when monitoring AI panels?

Respect robots.txt directives and terms of service, store only non-PII data, and document capture methods in a data processing register. Vet vendors for SOC 2/ISO 27001, set retention windows, and restrict access via role-based controls.

How should global or multilingual brands adapt measurement for different markets?

Build locale-specific entity graphs and track citations from regional authorities, not just global sources. Use multilingual embeddings and country-level dashboards to detect gaps and volatility by language and market.

What team capabilities are critical to run an AI-focused SEO measurement program?

Pair an SEO strategist with an analyst who can work with embeddings/NLP, a content lead versed in structured authoring, and a RevOps partner to connect metrics to pipeline. Assign an ‘entity owner’ to maintain schema and knowledge sources.

How can we prove causality between our content updates and improvements in AI visibility?

Use staggered rollouts across comparable query clusters and apply difference-in-differences analysis against untouched controls. Pre-register your change windows and compare pre-/post-trends to isolate treatment effects.
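
The basic difference-in-differences estimate can be sketched in a few lines. The weekly AI SOV values below are invented, and the estimate is only meaningful if the treated and control clusters would have followed parallel trends without the update, which you would need to check in your own data.

```python
from statistics import mean

def diff_in_diff(treated_pre, treated_post, control_pre, control_post):
    """Change in the treated group minus change in the control group."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change

# Hypothetical weekly AI SOV (percent) before and after a content update.
treated_pre = [20.0, 21.0, 19.0]
treated_post = [26.0, 27.0, 25.0]
control_pre = [18.0, 19.0, 17.0]
control_post = [20.0, 21.0, 19.0]

effect = diff_in_diff(treated_pre, treated_post, control_pre, control_post)
print(effect)  # prints: 4.0
```

Here the treated cluster rose six points while the untouched control rose two, so four points is the portion attributable to the update under the parallel-trends assumption.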

How do we manage risk from sudden LLM or search engine updates?

Create canary clusters you monitor hourly and set alert thresholds for abrupt shifts. Maintain a change log for your own releases, diversify the content formats and sources cited, and prioritize fast refreshes for high-revenue intents when anomalies appear.
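
One simple way to define an "abrupt shift" for a canary cluster is a z-score against recent history. The sketch below is only one option: the threshold of 3.0, the function name, and the sample readings are assumptions, and noisy metrics may need a more robust method.

```python
from statistics import mean, stdev

def is_anomalous(history, latest, z_threshold=3.0):
    """Flag `latest` if it sits more than `z_threshold` standard deviations
    from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    sigma = stdev(history)
    if sigma == 0:
        return latest != history[0]  # any change from a flat series is notable
    return abs(latest - mean(history)) / sigma > z_threshold

# Hypothetical hourly AI SOV readings for a canary cluster.
history = [20.0, 21.0, 19.0, 20.0, 20.0]
alert_on_drop = is_anomalous(history, 12.0)
alert_on_noise = is_anomalous(history, 20.5)
```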